Manipulating, analyzing and exporting data with tidyverse

Data Carpentry contributors

Manipulating and analyzing data with dplyr

Learning Objectives

- Describe the purpose of the dplyr and tidyr packages.

- Select certain columns in a data frame with the dplyr function select.

- Extract certain rows in a data frame according to logical (boolean) conditions with the dplyr function filter.

- Link the output of one dplyr function to the input of another function with the ‘pipe’ operator %>%.

- Add new columns to a data frame that are functions of existing columns with mutate.

- Use the split-apply-combine concept for data analysis.

- Use summarize, group_by, and count to split a data frame into groups of observations, apply summary statistics for each group, and then combine the results.

- Describe the concept of a wide and a long table format and for which purpose those formats are useful.

- Describe what key-value pairs are.

- Reshape a data frame from long to wide format and back with the spread and gather commands from the tidyr package.

- Export a data frame to a .csv file.

Data manipulation using dplyr and tidyr

Bracket subsetting is handy, but it can be cumbersome and difficult to read, especially for complicated operations. Enter dplyr. dplyr is a package for helping with tabular data manipulation. It pairs nicely with tidyr which enables you to swiftly convert between different data formats for plotting and analysis.

The tidyverse package is an “umbrella-package” that installs tidyr, dplyr, and several other useful packages for data analysis, such as ggplot2, tibble, etc.

The tidyverse package tries to address 3 common issues that arise when doing data analysis in R:

- The results from a base R function sometimes depend on the type of data.

- R expressions are used in a non-standard way, which can be confusing for new learners.

- Hidden arguments have default behaviors that new learners are not aware of.

You should already have installed and loaded the tidyverse package. If you haven’t already done so, you can type install.packages("tidyverse") straight into the console. Then, type library(tidyverse) to load the package.

What are dplyr and tidyr?

The package dplyr provides helper tools for the most common data manipulation tasks. It is built to work directly with data frames, with many common tasks optimized by being written in a compiled language (C++). An additional feature is the ability to work directly with data stored in an external database. The benefits of doing this are that the data can be managed natively in a relational database, queries can be conducted on that database, and only the results of the query are returned.

This addresses a common problem with R in that all operations are conducted in-memory and thus the amount of data you can work with is limited by available memory. The database connections essentially remove that limitation in that you can connect to a database of many hundreds of GB, conduct queries on it directly, and pull back into R only what you need for analysis.

The package tidyr addresses the common problem of wanting to reshape your data for plotting and usage by different R functions. For example, sometimes we want data sets where we have one row per measurement. Other times we want a data frame where each measurement type has its own column, and rows are instead more aggregated groups (e.g., a time period, an experimental unit like a plot or a batch number). Moving back and forth between these formats is non-trivial, and tidyr gives you tools for this and more sophisticated data manipulation.

To learn more about dplyr and tidyr after the workshop, you may want to check out this handy data transformation with dplyr cheatsheet and this one about tidyr.

As before, we’ll read in our data using the read_csv() function from the tidyverse package readr.

#> New names:
#> • `` -> `...1`
#> Rows: 34786 Columns: 14
#> ── Column specification ────────────────────────────────────────────────────────
#> Delimiter: ","
#> chr (6): period, diagnostic, decoration_type, ceramic_type, manufacture_tech...
#> dbl (8): ...1, record_id, month, day, year, plot_id, length, diameter
#> ℹ Use `spec()` to retrieve the full column specification for this data.
#> ℹ Specify the column types or set `show_col_types = FALSE` to quiet this message.

Next, we’re going to learn some of the most common dplyr functions:

- select(): subset columns

- filter(): subset rows on conditions

- mutate(): create new columns by using information from other columns

- group_by() and summarize(): create summary statistics on grouped data

- arrange(): sort results

- count(): count discrete values

Selecting columns and filtering rows

To select columns of a data frame, use select(). The first argument to this function is the data frame (surveys), and the subsequent arguments are the columns to keep.

To select all columns except certain ones, put a “-” in front of the variable to exclude it.

This will select all the variables in surveys except record_id and period.
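As a minimal sketch of both forms of select(), the block below uses a tiny made-up tibble called surveys_demo in place of the real surveys data. Only the column names follow the lesson; the values are invented for illustration.

```r
library(dplyr)

# Hypothetical stand-in for `surveys`; the values are made up
surveys_demo <- tibble(
  record_id  = 1:4,
  period     = c("P1", "P1", "P2", "P2"),
  diagnostic = c("rim", "base", "rim", NA),
  diameter   = c(4, 12, NA, 3)
)

# Keep only the named columns
kept <- select(surveys_demo, period, diagnostic, diameter)

# Keep every column except record_id and period
dropped <- select(surveys_demo, -record_id, -period)

names(kept)
names(dropped)
```

The first argument is always the data frame; every later argument names a column to keep, or to drop when prefixed with “-”.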

To choose rows based on a specific criterion, use filter():
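A short sketch of filter(), again on a made-up tibble (surveys_demo and its values are assumptions, not the lesson's data):

```r
library(dplyr)

# Hypothetical stand-in for `surveys`; the values are made up
surveys_demo <- tibble(
  record_id = 1:4,
  year      = c(1994, 1995, 1995, 1996),
  diameter  = c(4, 12, NA, 3)
)

# Keep rows where year equals 1995
in_1995 <- filter(surveys_demo, year == 1995)

# Keep rows where diameter is below 5; rows where the
# condition evaluates to NA are dropped as well
small <- filter(surveys_demo, diameter < 5)

in_1995
small
```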

Pipes

What if you want to select and filter at the same time? There are three ways to do this: use intermediate steps, nested functions, or pipes.

With intermediate steps, you create a temporary data frame and use that as input to the next function, like this:

surveys2 <- filter(surveys, diameter < 5)

surveys_sml <- select(surveys2, period, diagnostic, diameter)

This is readable, but can clutter up your workspace with lots of objects that you have to name individually. With multiple steps, that can be hard to keep track of.

You can also nest functions (i.e. one function inside of another), like this:
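A nested call could look like the following sketch, which uses a made-up tibble (surveys_demo is a hypothetical stand-in for surveys):

```r
library(dplyr)

# Hypothetical stand-in for `surveys`; the values are made up
surveys_demo <- tibble(
  period     = c("P1", "P1", "P2", "P2"),
  diagnostic = c("rim", "base", "rim", NA),
  diameter   = c(4, 12, NA, 3),
  year       = c(1994, 1995, 1995, 1996)
)

# filter() is the inner call, so it runs first;
# select() then works on the filtered rows
surveys_sml <- select(filter(surveys_demo, diameter < 5),
                      period, diagnostic, diameter)
surveys_sml
```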

This is handy, but can be difficult to read if too many functions are nested, as R evaluates the expression from the inside out (in this case, filtering, then selecting).

The last option, pipes, are a recent addition to R. Pipes let you take the output of one function and send it directly to the next, which is useful when you need to do many things to the same dataset. Pipes in R look like %>% and are made available via the magrittr package, installed automatically with dplyr. If you use RStudio, you can type the pipe with Ctrl + Shift + M if you have a PC or Cmd + Shift + M if you have a Mac.
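The same filter-then-select operation written with pipes might look like this sketch. The surveys_demo tibble is a made-up stand-in for surveys, so the values are illustrative only.

```r
library(dplyr)

# Hypothetical stand-in for `surveys`; the values are made up
surveys_demo <- tibble(
  period     = c("P1", "P1", "P2", "P2"),
  diagnostic = c("rim", "base", "rim", NA),
  diameter   = c(4, 12, NA, 3),
  year       = c(1994, 1995, 1995, 1996)
)

# Each %>% passes the result on its left as the first
# argument of the function on its right
piped <- surveys_demo %>%
  filter(diameter < 5) %>%
  select(period, diagnostic, diameter)

piped
```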

In the above code, we use the pipe to send the surveys dataset first through filter() to keep rows where diameter is less than 5, then through select() to keep only the period, diagnostic, and diameter columns. Since %>% takes the object on its left and passes it as the first argument to the function on its right, we don’t need to explicitly include the data frame as an argument to the filter() and select() functions any more.

Some may find it helpful to read the pipe like the word “then.” For instance, in the example above, we took the data frame surveys, then we filtered for rows with diameter < 5, then we selected columns period, diagnostic, and diameter. The dplyr functions by themselves are somewhat simple, but by combining them into linear workflows with the pipe we can accomplish more complex manipulations of data frames.

If we want to create a new object with this smaller version of the data, we can assign it a new name:

surveys_sml <- surveys %>%
  filter(diameter < 5) %>%
  select(period, diagnostic, diameter)

surveys_sml

Note that the final data frame is the leftmost part of this expression.

Challenge

Using pipes, subset the surveys data to include artefacts collected before 1995 and retain only the columns year, diagnostic, and diameter.

Mutate

Frequently you’ll want to create new columns based on the values in existing columns, for example to do unit conversions, or to find the ratio of values in two columns. For this we’ll use mutate().

To create a new column of diameter in cm:

You can also create a second new column based on the first new column within the same call of mutate():
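The two mutate() steps described above can be sketched as follows. This assumes diameter is recorded in millimetres, which the text does not state explicitly, and uses a made-up tibble; the names surveys_demo, diameter_cm, and diameter_m are hypothetical.

```r
library(dplyr)

# Hypothetical stand-in for `surveys`; diameter is ASSUMED to be in mm
surveys_demo <- tibble(
  record_id = 1:4,
  diameter  = c(40, 120, NA, 30)
)

converted <- surveys_demo %>%
  mutate(diameter_cm = diameter / 10,      # mm to cm
         diameter_m  = diameter_cm / 100)  # uses the column created just above

converted
```

Columns created earlier in a mutate() call are available to later expressions in the same call, which is why diameter_m can refer to diameter_cm.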

If this runs off your screen and you just want to see the first few rows, you can use a pipe to view the head() of the data. (Pipes work with non-dplyr functions, too, as long as the dplyr or magrittr package is loaded).

The first few rows of the output are full of NAs, so if we wanted to remove those we could insert a filter() in the chain:
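A sketch of such a chain on made-up data (surveys_demo is hypothetical, and the millimetre unit for diameter is an assumption):

```r
library(dplyr)

# Hypothetical stand-in for `surveys`; diameter is ASSUMED to be in mm
surveys_demo <- tibble(
  record_id = 1:4,
  diameter  = c(NA, 120, NA, 30)
)

no_missing <- surveys_demo %>%
  filter(!is.na(diameter)) %>%          # drop rows with a missing diameter
  mutate(diameter_cm = diameter / 10) %>%
  head()                                # show at most the first six rows

no_missing
```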

is.na() is a function that determines whether something is an NA. The ! symbol negates the result, so we’re asking for every row where diameter is not an NA.

Challenge

Create a new data frame from the

surveysdata that meets the following criteria: contains only theperiodcolumn and a new column calledlength_cmcontaining thelengthvalues (currently in mm) converted to centimeters. In thislength_cmcolumn, there are noNAs and all values are less than 3.Hint: think about how the commands should be ordered to produce this data frame!

Split-apply-combine data analysis and the summarize() function

Many data analysis tasks can be approached using the split-apply-combine paradigm: split the data into groups, apply some analysis to each group, and then combine the results. The key dplyr functions for this workflow are group_by() and summarize().

The group_by() and summarize() functions

group_by() is often used together with summarize(), which collapses each group into a single-row summary of that group. group_by() takes as arguments the column names that contain the categorical variables for which you want to calculate the summary statistics. So to compute the mean diameter by diagnostic:
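A minimal sketch of this grouping step, using a made-up tibble in place of surveys (the values are invented):

```r
library(dplyr)

# Hypothetical stand-in for `surveys`; the values are made up
surveys_demo <- tibble(
  diagnostic = c("rim", "base", "rim", "base", NA),
  diameter   = c(4, 12, 6, NA, 3)
)

mean_by_diagnostic <- surveys_demo %>%
  group_by(diagnostic) %>%                                 # split
  summarize(mean_diameter = mean(diameter, na.rm = TRUE))  # apply and combine

mean_by_diagnostic
```

Each group collapses to one row, so the result has as many rows as there are distinct diagnostic values, with NA counted as its own group.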

You may also have noticed that the output from these calls doesn’t run off the screen anymore. It’s one of the advantages of tbl_df over data frame.

You can also group by multiple columns:

surveys %>%
  group_by(diagnostic, period) %>%
  summarize(mean_diameter = mean(diameter, na.rm = TRUE)) %>%
  tail()

#> `summarise()` has grouped output by 'diagnostic'. You can override using the
#> `.groups` argument.

Here, we used tail() to look at the last six rows of our summary. Before, we had used head() to look at the first six rows. We can see that the diagnostic column contains NA values because the diagnostic was not recorded for some artefacts. The resulting mean_diameter column does not contain NA but NaN (which refers to “Not a Number”) because mean() was called with na.rm = TRUE on a vector that contained only NA values, leaving nothing to average. To avoid this, we can remove the missing values for diameter before we attempt to calculate the summary statistics on diameter. Because the missing values are removed first, we can omit na.rm = TRUE when computing the mean:

surveys %>%
  filter(!is.na(diameter)) %>%
  group_by(diagnostic, period) %>%
  summarize(mean_diameter = mean(diameter))

#> `summarise()` has grouped output by 'diagnostic'. You can override using the
#> `.groups` argument.

Here, again, the output from these calls doesn’t run off the screen anymore. If you want to display more data, you can use the print() function at the end of your chain with the argument n specifying the number of rows to display:

surveys %>%
  filter(!is.na(diameter)) %>%
  group_by(diagnostic, period) %>%
  summarize(mean_diameter = mean(diameter)) %>%
  print(n = 15)

#> `summarise()` has grouped output by 'diagnostic'. You can override using the
#> `.groups` argument.

Once the data are grouped, you can also summarize multiple variables at the same time (and not necessarily on the same variable). For instance, we could add a column indicating the minimum diameter for each period within each diagnostic:

surveys %>%
  filter(!is.na(diameter)) %>%
  group_by(diagnostic, period) %>%
  summarize(mean_diameter = mean(diameter),
            min_diameter = min(diameter))

#> `summarise()` has grouped output by 'diagnostic'. You can override using the
#> `.groups` argument.

(Note that the minimum values don’t look very sensible here: remember that our dataset isn’t a real one!)

It is sometimes useful to rearrange the result of a query to inspect the values. For instance, we can sort on min_diameter to put the groups with the smallest minimum diameter first:

surveys %>%
  filter(!is.na(diameter)) %>%
  group_by(diagnostic, period) %>%
  summarize(mean_diameter = mean(diameter),
            min_diameter = min(diameter)) %>%
  arrange(min_diameter)

#> `summarise()` has grouped output by 'diagnostic'. You can override using the
#> `.groups` argument.

To sort in descending order, we need to add the desc() function. If we want to sort the results by decreasing order of mean diameter:

surveys %>%
  filter(!is.na(diameter)) %>%
  group_by(diagnostic, period) %>%
  summarize(mean_diameter = mean(diameter),
            min_diameter = min(diameter)) %>%
  arrange(desc(mean_diameter))

#> `summarise()` has grouped output by 'diagnostic'. You can override using the
#> `.groups` argument.

Counting

When working with data, we often want to know the number of observations found for each factor or combination of factors. For this task, dplyr provides count(). For example, if we wanted to count the number of rows of data for each diagnostic, we would do:
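A sketch of count() on a made-up tibble (surveys_demo and its values are hypothetical):

```r
library(dplyr)

# Hypothetical stand-in for `surveys`; the values are made up
surveys_demo <- tibble(
  diagnostic = c("rim", "base", "rim", "rim", NA)
)

# One row per distinct diagnostic, with the number of rows in column n
diagnostic_counts <- surveys_demo %>%
  count(diagnostic)

diagnostic_counts
```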

The count() function is shorthand for something we’ve already seen: grouping by a variable, and summarizing it by counting the number of observations in that group. In other words, surveys %>% count(diagnostic) is equivalent to:
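The equivalence can be checked with a small sketch on made-up data (surveys_demo is a hypothetical stand-in for surveys):

```r
library(dplyr)

# Hypothetical stand-in for `surveys`; the values are made up
surveys_demo <- tibble(
  diagnostic = c("rim", "base", "rim", "rim", NA)
)

# Shorthand
by_count <- surveys_demo %>%
  count(diagnostic)

# Longhand: group, then count the rows in each group with n()
by_hand <- surveys_demo %>%
  group_by(diagnostic) %>%
  summarize(n = n())

# Compare the two results as plain data frames
all.equal(as.data.frame(by_count), as.data.frame(by_hand))
```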

For convenience, count() provides the sort argument:
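A sketch of the sort argument, again on a made-up tibble (surveys_demo is hypothetical):

```r
library(dplyr)

# Hypothetical stand-in for `surveys`; the values are made up
surveys_demo <- tibble(
  diagnostic = c("rim", "base", "rim", "rim", NA)
)

# sort = TRUE puts the largest counts first
sorted_counts <- surveys_demo %>%
  count(diagnostic, sort = TRUE)

sorted_counts
```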

The previous example shows the use of count() to count the number of rows/observations for one factor (i.e., diagnostic). If we wanted to count a combination of factors, such as diagnostic and ceramic_type, we would specify the first and the second factor as the arguments of count():
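Counting a combination of two factors might look like the sketch below. The tibble surveys_demo and its ceramic_type values are made up for illustration.

```r
library(dplyr)

# Hypothetical stand-in for `surveys`; the values are made up
surveys_demo <- tibble(
  diagnostic   = c("rim", "rim", "base", NA, "rim", "base"),
  ceramic_type = c("bowl", "bowl", "bowl", "bowl", "jar", "jar")
)

# One row per observed diagnostic / ceramic_type combination
combo_counts <- surveys_demo %>%
  count(diagnostic, ceramic_type)

combo_counts
```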

With the above code, we can proceed with arrange() to sort the table according to a number of criteria so that we have a better comparison. For instance, we might want to arrange the table above (i) in alphabetical order of the levels of ceramic_type and (ii) in descending order of the count:

From the table above, we may learn that, for instance, there are 75 observations of the Bevelled-Rim Bowl ceramic type for which the diagnostic is not specified (i.e. NA).
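The count-then-arrange step described above can be sketched on made-up data as follows (surveys_demo and its values are hypothetical, so the counts differ from those in the real table):

```r
library(dplyr)

# Hypothetical stand-in for `surveys`; the values are made up
surveys_demo <- tibble(
  diagnostic   = c("rim", "rim", "base", NA, "rim", "base"),
  ceramic_type = c("bowl", "bowl", "bowl", "bowl", "jar", "jar")
)

arranged_counts <- surveys_demo %>%
  count(diagnostic, ceramic_type) %>%
  arrange(ceramic_type, desc(n))  # alphabetical type, then largest count first

arranged_counts
```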

Challenge

- How many artefacts were found for each recovery_method?

- Use group_by() and summarize() to find the mean, min, and max length for each ceramic_type (using period). Also add the number of observations (hint: see ?n).

- What was the widest artefact measured in each year? Return the columns year, decoration_type, period, and diameter.

Reshaping with gather and spread

In the spreadsheet lesson, we discussed how to structure our data leading to the four rules defining a tidy dataset:

- Each variable has its own column

- Each observation has its own row

- Each value must have its own cell

- Each type of observational unit forms a table

Here we examine the fourth rule: Each type of observational unit forms a table.

In surveys, each row contains the values of variables associated with one record (the unit), such as the diameter or diagnostic of the artefact. What if, instead of comparing records, we wanted to compare the mean diameter of each decoration type between plots? (We ignore recovery_method for simplicity.)

We’d need to create a new table where each row (the unit) is comprised of values of variables associated with each plot. In practical terms this means the values in decoration_type would become the names of column variables and the cells would contain the values of the mean diameter observed on each plot.

Having created a new table, it is therefore straightforward to explore the relationship between the diameter of different decoration_types within, and between, the plots. The key point here is that we are still following a tidy data structure, but we have reshaped the data according to the observations of interest: average decoration_type diameter per plot instead of recordings per date.

The opposite transformation would be to transform column names into values of a variable.

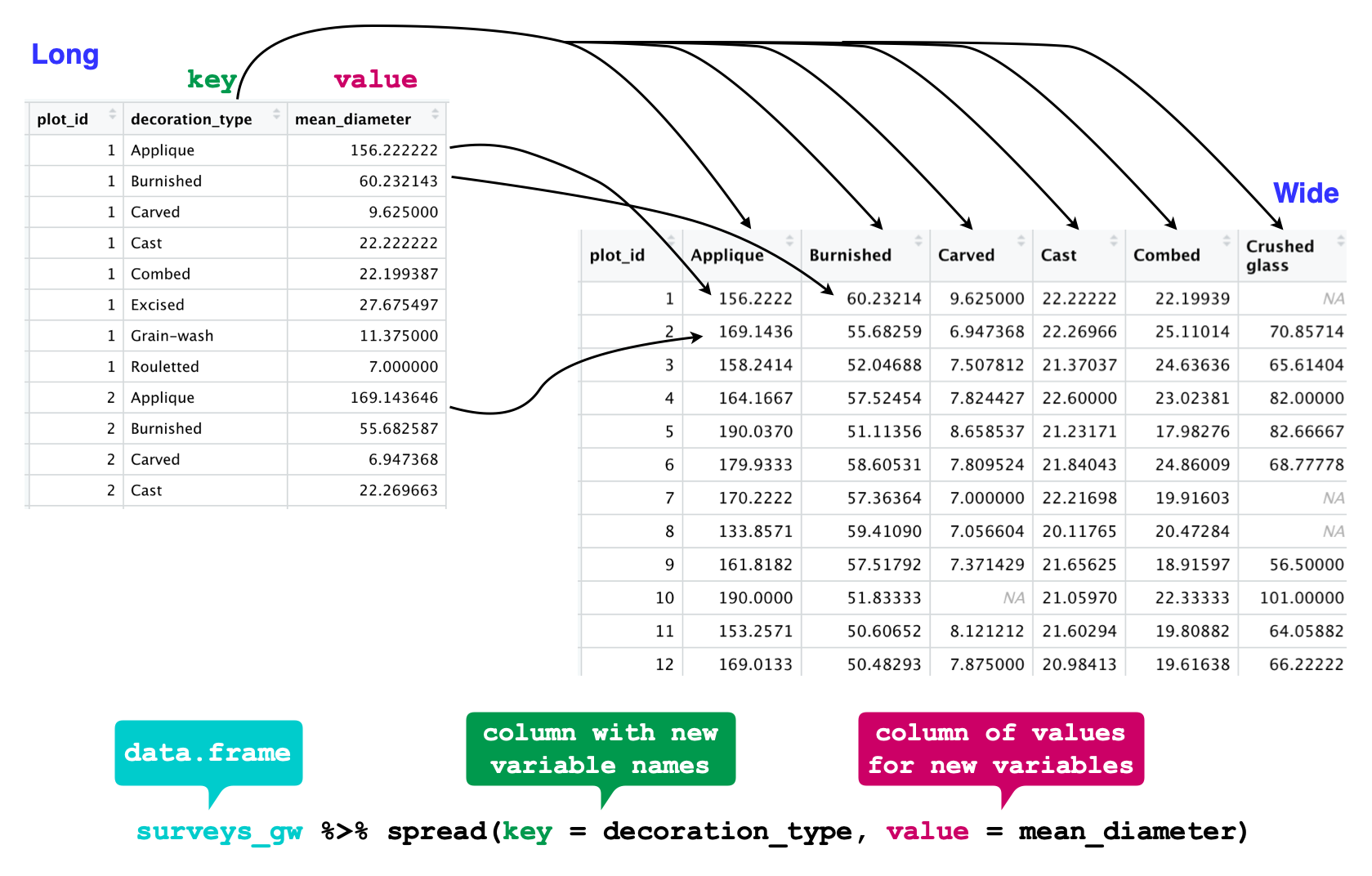

We can do both of these transformations with two tidyr functions, spread() and gather().

Spreading

spread() takes three principal arguments:

- the data

- the key column variable whose values will become new column names.

- the value column variable whose values will fill the new column variables.

Further arguments include fill which, if set, fills in missing values with the value provided.

Let’s use spread() to transform surveys to find the mean diameter of each decoration_type in each plot over the entire survey period. We use filter(), group_by() and summarise() to filter our observations and variables of interest, and create a new variable for the mean_diameter.

surveys_gw <- surveys %>%
  filter(!is.na(diameter)) %>%
  group_by(plot_id, decoration_type) %>%
  summarize(mean_diameter = mean(diameter))

#> `summarise()` has grouped output by 'plot_id'. You can override using the
#> `.groups` argument.

This yields surveys_gw, where the observations for each plot are spread across multiple rows: 196 observations of 3 variables. Using spread() to key on decoration_type with values from mean_diameter, this becomes 24 observations of 11 variables, one row for each plot.

surveys_spread <- surveys_gw %>%

spread(key = decoration_type, value = mean_diameter)

str(surveys_spread)

We could now plot comparisons between the diameter of decoration types in different plots, although we may wish to fill in the missing values first.

Gathering

The opposing situation could occur if we had been provided with data in the form of surveys_spread, where the decoration_type names are column names, but we wish to treat them as values of a decoration_type variable instead.

In this situation we are gathering the column names and turning them into a pair of new variables. One variable represents the column names as values, and the other variable contains the values previously associated with the column names.

gather() takes four principal arguments:

- the data

- the key column variable we wish to create from column names.

- the values column variable we wish to create and fill with values associated with the key.

- the names of the columns we use to fill the key variable (or to drop).

To recreate surveys_gw from surveys_spread we would create a key called decoration_type and value called mean_diameter and use all columns except plot_id for the key variable. Here we exclude plot_id from being gather()ed.

surveys_gather <- surveys_spread %>%

gather(key = "decoration_type", value = "mean_diameter", -plot_id)

str(surveys_gather)

Note that now the NA decoration_types are included in the re-gathered format. Spreading and then gathering can be a useful way to balance out a dataset so every replicate has the same composition.

We could also have used a specification for what columns to include. This can be useful if you have a large number of identifying columns, and it allows you to type less in order to specify what to gather than what to leave alone. And if the columns are directly adjacent, we don’t even need to list them all out - instead you can use the : operator!

surveys_spread %>%
  gather(key = "decoration_type", value = "mean_diameter", Rouletted:Glazed) %>%
  head()

Challenge

- Spread the surveys data frame with year as columns, plot_id as rows, and the number of decoration types per plot as the values. You will need to summarize before reshaping, and use the function n_distinct() to get the number of unique decoration_types within a particular chunk of data. It’s a powerful function! See ?n_distinct for more.

Answer

surveys_spread_decoration_types <- surveys %>%
  group_by(plot_id, year) %>%
  summarize(n_decoration_types = n_distinct(decoration_type)) %>%
  spread(year, n_decoration_types)

#> `summarise()` has grouped output by 'plot_id'. You can override using the
#> `.groups` argument.

- Now take that data frame and gather() it again, so each row is a unique plot_id by year combination.

- The surveys data set has two measurement columns: length and diameter. This makes it difficult to do things like look at the relationship between mean values of each measurement per year in different plot types. Let’s walk through a common solution for this type of problem. First, use gather() to create a dataset where we have a key column called measurement and a value column that takes on the value of either length or diameter. Hint: You’ll need to specify which columns are being gathered.

- With this new data set, calculate the average of each measurement in each year for each different recovery_method. Then spread() them into a data set with a column for length and diameter. Hint: You only need to specify the key and value columns for spread().

Answer

surveys_long %>%
  group_by(year, measurement, recovery_method) %>%
  summarize(mean_value = mean(value, na.rm = TRUE)) %>%
  spread(measurement, mean_value)

#> `summarise()` has grouped output by 'year', 'measurement'. You can override
#> using the `.groups` argument.

Exporting data

Now that you have learned how to use dplyr to extract information from or summarize your raw data, you may want to export these new data sets to share them with your collaborators or for archival.

Similar to the read_csv() function used for reading CSV files into R, there is a write_csv() function that generates CSV files from data frames.

Before using write_csv(), we are going to create a new folder, data, in our working directory that will store this generated dataset. We don’t want to write generated datasets in the same directory as our raw data. It’s good practice to keep them separate. The data_raw folder should only contain the raw, unaltered data, and should be left alone to make sure we don’t delete or modify it. In contrast, our script will generate the contents of the data directory, so even if the files it contains are deleted, we can always re-generate them.

In preparation for our next lesson on plotting, we are going to prepare a cleaned up version of the data set that doesn’t include any missing data.

Let’s start by removing observations of artefacts for which diameter and length are missing, or the diagnostic has not been determined:

surveys_complete <- surveys %>%
  filter(!is.na(diameter),     # remove missing diameter
         !is.na(length),       # remove missing length
         !is.na(diagnostic))   # remove missing diagnostic

Because we are interested in plotting how ceramic type abundances have changed through time, we are also going to remove observations for rare ceramic types (i.e., those that have been observed fewer than 50 times). We will do this in two steps: first we are going to create a data set that counts how often each ceramic type has been observed, and filter out the rare ceramic types; then, we will extract only the observations for these more common ceramic types:

## Extract the most common period
ceramic_type_counts <- surveys_complete %>%
  count(period) %>%
  filter(n >= 50)

## Only keep observations from the most common period
surveys_complete <- surveys_complete %>%
  filter(period %in% ceramic_type_counts$period)

To make sure that everyone has the same data set, check that surveys_complete has 30463 rows and 14 columns by typing dim(surveys_complete).

Now that our data set is ready, we can save it as a CSV file in our data folder.
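As a hedged sketch of the export step, the block below writes a small made-up tibble to a data folder created inside a temporary directory. The names surveys_demo, out_dir, and out_path are assumptions for illustration; in the lesson you would write surveys_complete into the data folder of your working directory instead.

```r
library(dplyr)
library(readr)

# Hypothetical stand-in for `surveys_complete`; the values are made up
surveys_demo <- tibble(
  record_id = 1:3,
  period    = c("P1", "P1", "P2"),
  diameter  = c(4, 12, 3)
)

# Create a "data" folder inside a temporary directory (assumed location)
out_dir <- file.path(tempdir(), "data")
dir.create(out_dir, showWarnings = FALSE)

# Write the data frame to a CSV file and confirm that the file exists
out_path <- file.path(out_dir, "surveys_demo.csv")
write_csv(surveys_demo, out_path)
file.exists(out_path)
```

Reading the file back with read_csv(out_path) is a quick way to confirm that the export kept every row and column.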

Page built on: 📆 2022-05-18 ‒ 🕢 12:37:25

Data Carpentry, 2014-2021.
